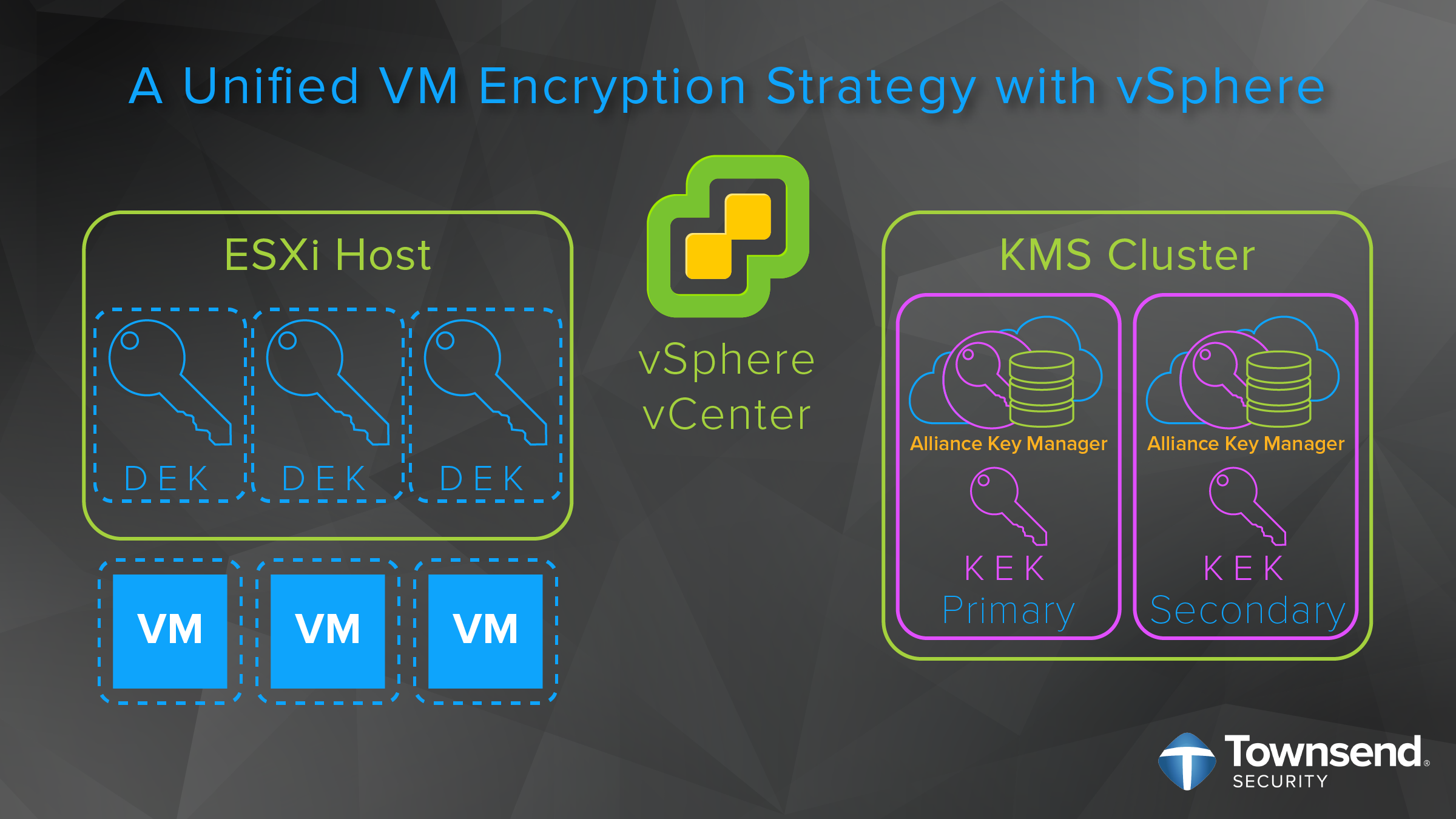

What is VMware Encryption for Data-at-Rest?

VMware vSphere encryption for data-at-rest has two main components: vSphere VM encryption and vSAN encryption. Both require only vCenter Server, a third-party Key Management Server (KMS), and ESXi hosts to work. Both are standards-based, KMIP compatible, and easy to deploy.

Which Encryption Option Should You Choose, vSphere VM or vSAN?

Data security is paramount for sensitive data-at-rest. Fortunately, protecting your data in VMware is relatively easy thanks to the introduction of vSphere VM encryption in version 6.5 and vSAN encryption in version 6.6. Even better, most folks won't have to choose between the two options; you will likely use both as needed. That said, there are times when you might prefer one over the other. With that in mind, here are some of the features of each and how they compare.

| Feature | vSphere VM | vSAN |
| --- | --- | --- |
| AES-256 encryption | Yes | Yes |
| KMIP compatibility | Yes | Yes |
| FIPS 140-2 compliant | Yes | Yes |
| Common Criteria compliant | Yes (ESXi 6.7) | Yes (ESXi 6.7) |
| Centralized encryption policy management | Yes | Yes |
| Centralized encryption key management (KMS) | Yes | Yes |
| Datastore encryption | No | Yes |
| Per-VM encryption | Yes | No |
| Each VM has a unique key | Yes | n/a |
| Encryption occurs before deduplication | Yes | No |
| Encryption occurs after deduplication | No | Yes |
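The last two rows of the table matter more than they might appear to. Here is a minimal Python sketch of why the order of encryption and deduplication affects space savings. It is an illustration only: `stand_in_encrypt` is a hypothetical keyed transform built from the standard library so the example runs anywhere, not XTS-AES, and the two "VMs" are just lists of identical blocks.

```python
import hashlib
import hmac
import os

BLOCK = 4096  # illustrative block size in bytes


def stand_in_encrypt(key: bytes, block_index: int, block: bytes) -> bytes:
    """Hypothetical stand-in for a keyed, position-tweaked cipher (NOT XTS-AES)."""
    stream = b""
    counter = 0
    while len(stream) < len(block):
        msg = block_index.to_bytes(8, "big") + counter.to_bytes(4, "big")
        stream += hmac.new(key, msg, hashlib.sha256).digest()
        counter += 1
    return bytes(a ^ b for a, b in zip(block, stream))


def unique_blocks(blocks) -> int:
    """Count distinct blocks, which is what a deduplicating store would keep."""
    return len({hashlib.sha256(b).digest() for b in blocks})


# Two hypothetical VMs that contain the same four blocks (e.g. the same OS image).
shared = [bytes([i]) * BLOCK for i in range(4)]
vm_a, vm_b = list(shared), list(shared)

# Deduplicate first, encrypt later: the storage layer still sees plaintext.
plain_unique = unique_blocks(vm_a + vm_b)

# Encrypt first with a unique key per VM: the storage layer sees only ciphertext.
key_a, key_b = os.urandom(32), os.urandom(32)
cipher_blocks = [stand_in_encrypt(key_a, i, b) for i, b in enumerate(vm_a)]
cipher_blocks += [stand_in_encrypt(key_b, i, b) for i, b in enumerate(vm_b)]
cipher_unique = unique_blocks(cipher_blocks)

print(f"unique blocks, dedup before encryption: {plain_unique}")
print(f"unique blocks, encryption before dedup: {cipher_unique}")
```

In this sketch the plaintext blocks collapse to four unique blocks, while the per-VM ciphertext stays at eight, because identical data encrypted under different keys no longer looks identical to the storage layer.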

One of the clearest cases for preferring one encryption option over the other is a multi-tenant environment. VMware gives these examples:

Engineering and Finance may have their own key managers and would require their VM's to be encrypted by their respective KMS. Or maybe your company has been merged with another company, each with their own KMS. Additionally, you may have a "Coke & Pepsi" scenario of two unrelated tenants. VM Encryption can handle this use case using the API or PowerCLI Modules for VM Encryption.

Since each VM is encrypted with a different key, vSphere VM encryption may be better suited to multi-tenant situations. Each tenant is assured not only that their sensitive data is not commingled with other tenants' data (separate VMs), but also that it is protected by separate keys.

Beyond that, VMware notes that “vSAN has unique capabilities for some workloads and may perform better in those situations.” So, if you are protecting larger datastores with a single tenant, vSAN encryption is likely the better fit.
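The guidance above can be condensed into a few lines of Python. This is only a rough sketch of the reasoning in this post, not an official VMware decision procedure; the `Workload` fields and the `suggest_encryption` helper are hypothetical names made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    multi_tenant: bool       # tenants need separate keys or separate KMSs
    needs_per_vm_keys: bool  # policy requires a unique key per VM
    on_vsan_datastore: bool  # the data lives on a vSAN datastore


def suggest_encryption(w: Workload) -> str:
    """Return the option this post's guidance would point to (a heuristic)."""
    if w.multi_tenant or w.needs_per_vm_keys:
        return "vSphere VM encryption"
    if w.on_vsan_datastore:
        return "vSAN encryption"
    # vSAN encryption is datastore-level, so outside vSAN only per-VM applies.
    return "vSphere VM encryption"


print(suggest_encryption(Workload(multi_tenant=True, needs_per_vm_keys=False, on_vsan_datastore=True)))
print(suggest_encryption(Workload(multi_tenant=False, needs_per_vm_keys=False, on_vsan_datastore=True)))
```

In practice many environments will use both options side by side, so treat the return value as a starting point rather than a verdict.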

With these distinctions in mind, here is the best news: They are equally easy to set up! We have put together two videos to highlight the steps to get encryption enabled in each environment:

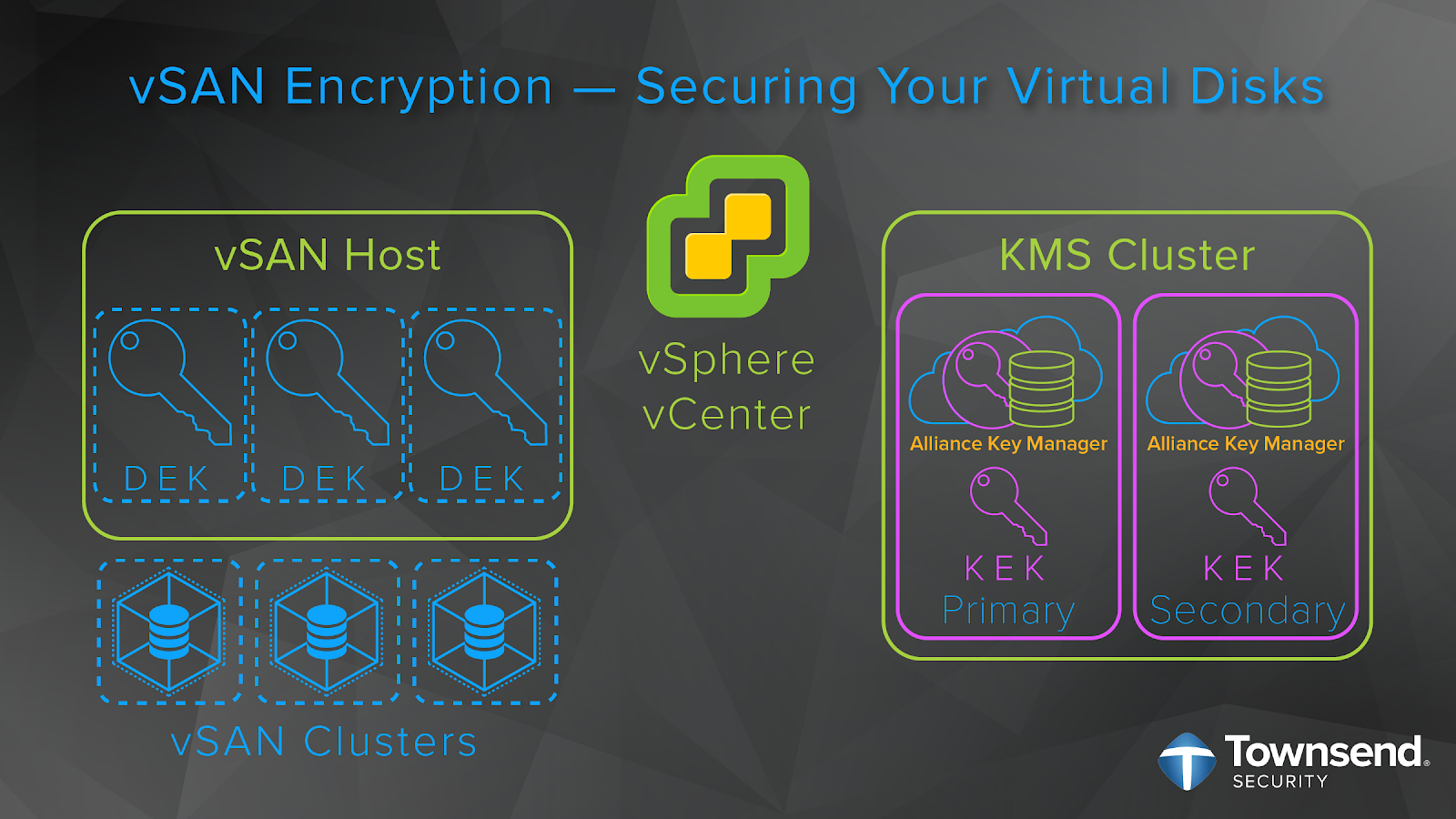

vSphere VM Encryption

For a more detailed look at vSphere VM encryption, please visit our post: vSphere Encryption—Creating a Unified Encryption Strategy. Here is a partial list of steps for enabling vSphere VM encryption:

- First, install and configure your KMIP-compliant key management server, such as our Alliance Key Manager, and register it to the vSphere KMS cluster.

- Next, you must set up the key management server (KMS) cluster.

- When you add a KMS cluster, vCenter will prompt you to make it the default. vCenter will provision the encryption keys from the cluster you designate as the default.

- Then, when encrypting, the ESXi host generates internal 256-bit (XTS-AES-256) DEKs to encrypt the VMs, files, and disks.

- The vCenter Server then requests a key from Alliance Key Manager. This key is used as the KEK.

- ESXi then uses the KEK to encrypt the DEK, and only the encrypted DEK is stored locally on the disk, along with the KEK ID.

- The KEK is safely stored in Alliance Key Manager. ESXi never stores the KEK on disk. Instead, vCenter Server stores the KEK ID for future reference. This way, your encrypted data stays safe even if you lose a backup or a hacker accesses your VMware environment.
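The DEK/KEK flow in the steps above is a form of envelope encryption, and it can be sketched in Python as follows. This is a simplified model under stated assumptions, not how ESXi or any KMS is implemented: `ToyKMS` is a hypothetical in-memory stand-in for a key manager, and `stand_in_wrap` is a standard-library XOR pad used only so the example runs without extra packages. It is not AES key wrapping and should not be used to protect real keys.

```python
import hashlib
import hmac
import os
import uuid


def stand_in_wrap(kek: bytes, dek: bytes) -> bytes:
    """XOR the DEK with a KEK-derived pad. Illustrative stand-in, NOT AES key wrap."""
    pad = hmac.new(kek, b"dek-wrap", hashlib.sha256).digest()
    return bytes(a ^ b for a, b in zip(dek, pad))


stand_in_unwrap = stand_in_wrap  # XOR with the same pad reverses the wrap


class ToyKMS:
    """Hypothetical in-memory KMS: creates KEKs, keeps them, returns them by ID."""

    def __init__(self):
        self._keys = {}

    def create_key(self):
        kek_id = str(uuid.uuid4())
        self._keys[kek_id] = os.urandom(32)
        return kek_id, self._keys[kek_id]

    def get_key(self, kek_id):
        return self._keys[kek_id]


def encrypt_vm(kms: ToyKMS):
    dek = os.urandom(32)            # the host generates an internal 256-bit DEK
    kek_id, kek = kms.create_key()  # a KEK is requested from the KMS
    # Only the wrapped DEK and the KEK ID are persisted; the KEK stays in the KMS.
    on_disk = {"kek_id": kek_id, "wrapped_dek": stand_in_wrap(kek, dek)}
    return dek, on_disk


def unlock_vm(kms: ToyKMS, on_disk: dict) -> bytes:
    kek = kms.get_key(on_disk["kek_id"])  # look the KEK up by its stored ID
    return stand_in_unwrap(kek, on_disk["wrapped_dek"])


kms = ToyKMS()
dek, on_disk = encrypt_vm(kms)
recovered = unlock_vm(kms, on_disk)
print("DEK recovered via KMS:", recovered == dek)
print("fields stored on disk:", sorted(on_disk))
```

The point of the design is visible in `on_disk`: someone who copies the disk or a backup gets only a wrapped DEK and a key ID, neither of which is useful without access to the KMS.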

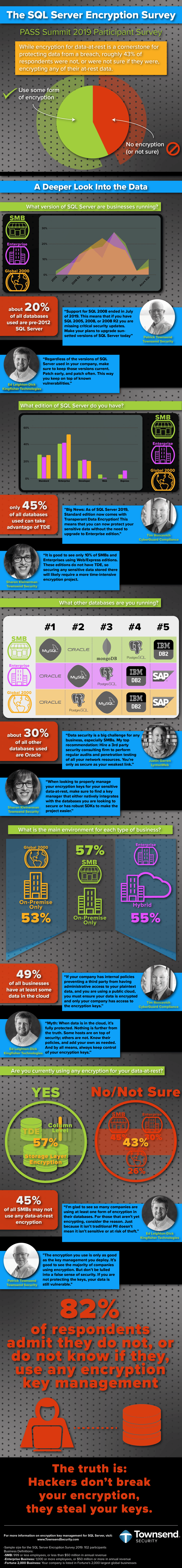

vSAN Encryption

For a more detailed look at vSAN encryption, please visit our post: vSAN Encryption: Locking your vSAN Down. Here is a partial list of steps for enabling vSAN encryption:

- First, install and configure your key management server (KMS), such as our Alliance Key Manager, and add its network address and port information to the vCenter KMS cluster.

- Then, you will need to set up a domain of trust between vCenter Server, your KMS, and your vSAN host.

- You will do this by exchanging administrative certificates between your KMS and vCenter Server to establish trust.

- Then, vCenter Server will pass the KMS connection data to the vSAN host.

- From there, the vSAN host will only request keys from that trusted KMS.

- The ESXi host generates a separate internal key for each disk. These are known as the data encryption keys, or DEKs.

- The vCenter Server then requests a key from the KMS. This key is used by the ESXi host as the key encryption key, or KEK.

- The ESXi host then uses the KEK to encrypt the DEK, and only the encrypted DEK is stored locally on the disk.

- The KEK is safely stored separately from the data and DEK in the KMS.

- The KMS also creates a host encryption key, or HEK, for encrypting core dumps. The HEK is managed within the KMS so that you can secure the core dump and control who can access the data.
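Two ideas from the steps above, the trusted KMS and the per-disk DEKs, can be modeled with a short Python sketch. Everything here is hypothetical and exists only for illustration: the `KmsConnection` and `VsanHost` classes, the host names, and the disk IDs are invented, and port 5696 is used simply because it is the port registered for KMIP. Real trust is established with certificates, which this sketch reduces to a simple equality check.

```python
import os
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass(frozen=True)
class KmsConnection:
    address: str
    port: int


@dataclass
class VsanHost:
    trusted_kms: Optional[KmsConnection] = None
    disk_deks: Dict[str, bytes] = field(default_factory=dict)

    def receive_kms_config(self, conn: KmsConnection) -> None:
        """vCenter Server passes the KMS connection data to the host."""
        self.trusted_kms = conn

    def may_request_keys_from(self, conn: KmsConnection) -> bool:
        """The host only talks to the KMS that vCenter Server told it to trust."""
        return self.trusted_kms is not None and conn == self.trusted_kms

    def claim_disk(self, disk_id: str) -> None:
        """Generate a fresh DEK for each disk, so no two disks share a key."""
        self.disk_deks[disk_id] = os.urandom(32)


trusted = KmsConnection("kms.example.com", 5696)  # example address
rogue = KmsConnection("rogue.example.com", 5696)  # example address

host = VsanHost()
host.receive_kms_config(trusted)
for disk in ("disk-0", "disk-1", "disk-2"):
    host.claim_disk(disk)

print("trusted KMS accepted:", host.may_request_keys_from(trusted))
print("rogue KMS accepted:", host.may_request_keys_from(rogue))
print("distinct DEKs:", len(set(host.disk_deks.values())))
```

Wrapping each of those DEKs with the KEK then works the same way as in the VM encryption case, with the KEK kept in the KMS and only the encrypted DEK stored on the disk.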

Final Thoughts

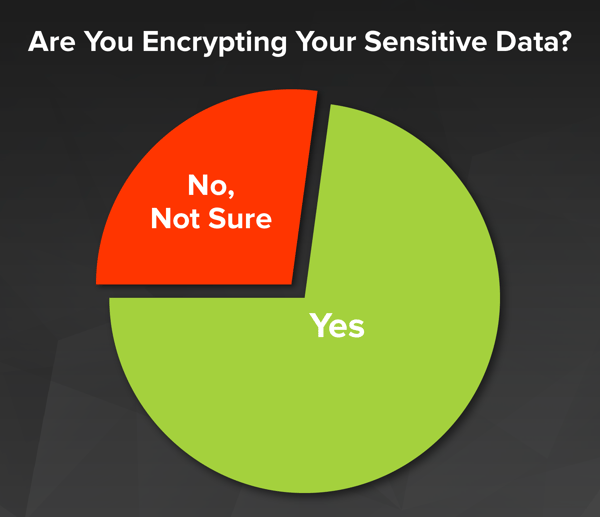

vSphere VM and vSAN encryption for data-at-rest are powerful tools for protecting your sensitive data, both for companies and for VMware Cloud Providers. Both are standards-based, policy-based, and KMIP compliant, which makes them powerful as well as easy to enable. While each has strengths that make it the better choice in some situations, most of the time the decision will simply come down to whether you need to secure data in a VM or in a vSAN datastore.

If you have sensitive data in VMware and are not encrypting, enable encryption today! We are happy to help.